AI-Era Interface Theory

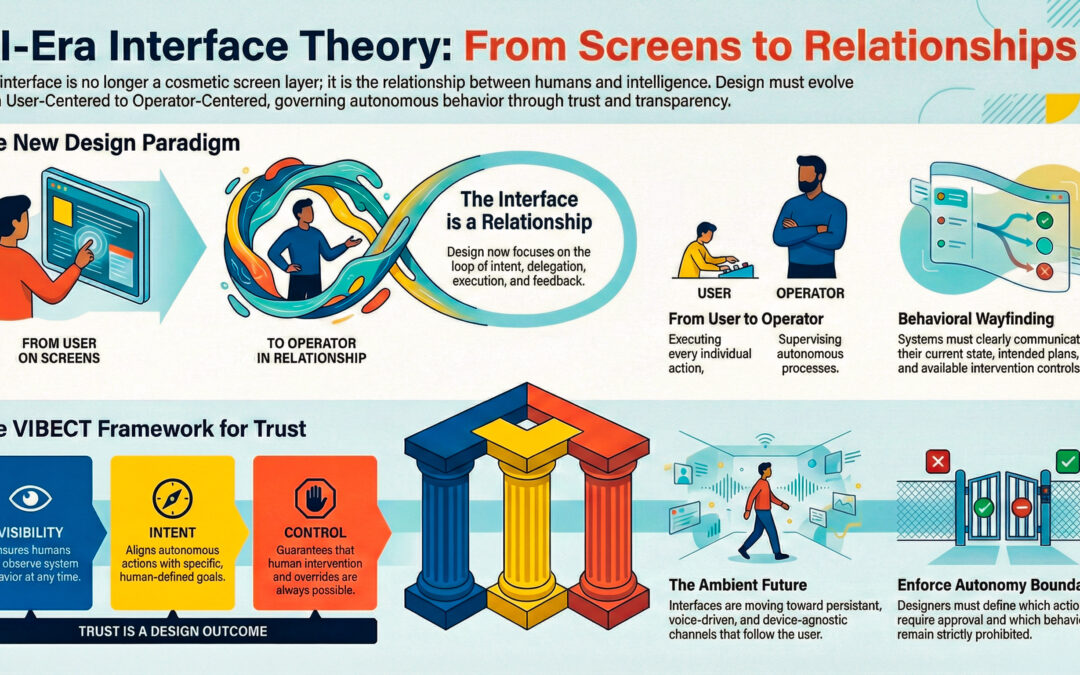

- The interface is no longer a screen — it is a relationship with intelligence

- Trust in AI is a design outcome, not a feature

- The VIBECT framework defines the conditions for trustworthy autonomy

- The future interface will be ambient, persistent, and voice-driven

- Security and Development agents form the invisible foundation of intelligent systems

Historical Reflection: Twenty Years Later

Over twenty years ago, I wrote and published a paper arguing that successful products share a common trait: good interface design—but that “interface” should never be treated as a cosmetic layer. Even then, I believed the interface was the wrong place to begin unless we were willing to treat interface design as system design.

Back then, the web was entering its “hypertext” era. Content was beginning to sprawl across pages and links, and users frequently became disoriented. The design challenge was navigation, clarity, and meaning-making—helping humans find their way through information.

Today we’re in a new transition. The disorientation is no longer just informational. It’s behavioral.

AI systems don’t simply present information; they increasingly act—completing tasks, making decisions, and executing workflows. That means the “interface” isn’t just what we see. It’s the structure that determines what a system will do, why it did it, and how a human can intervene.

This update is my attempt to connect those original principles to the AI-agent era—where trust is no longer a brand promise. It is a design outcome.

Introduction — The Interface Is No Longer a Screen

For decades, successful digital systems have shared a common characteristic: thoughtful interface design. Traditionally, the interface referred to visual layouts, navigation, and usability — the surface through which users interacted with software.

Early human-computer interaction research emphasized that effective interfaces emerge not from aesthetics alone, but from understanding human cognition, goals, and behavior (Laurel, 1991; Norman, 1988). Even in the early days of hypertext and networked systems, designers recognized that the interface represented a system of interaction, not merely a visual layer.

Today, that definition is no longer sufficient.

With the rise of artificial intelligence and autonomous systems, the interface has evolved beyond screens. The interface is now the relationship between humans and intelligent systems — the loop of intent, delegation, execution, feedback, correction, and trust.

Designing this relationship is not about appearance. It is about governing behavior, preserving human control, and building trust in autonomous intelligence.

From User-Centered Design to Operator-Centered Design

User-Centered Design (UCD) established that design must begin with user needs and goals rather than system capabilities (Norman, 1988). In AI-driven environments, this principle expands into what can be described as Operator-Centered Design, where humans supervise autonomous processes rather than directly executing every action.

This shift echoes early instructional design theory, which emphasized aligning systems with human cognitive processes and decision-making (Gagné & Merrill, 1990).

The central design questions become:

- What decisions may the system make independently?

- What actions require human approval?

- What behaviors must remain prohibited?

- What must the system explain after acting?

These questions define autonomy boundaries, the foundation of trust in intelligent systems.
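One way to make these four questions concrete is to write them down as an explicit policy that the system consults before acting. The sketch below is illustrative only: the `AutonomyPolicy` class, its field names, and the example action names are assumptions introduced here, not part of any existing framework. It also assumes that unlisted actions should fail closed and be routed to a human.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"                    # the system may act independently
    NEEDS_APPROVAL = "needs_approval"  # a human must approve first
    PROHIBITED = "prohibited"          # the action is never permitted


@dataclass(frozen=True)
class AutonomyPolicy:
    """Hypothetical policy object; all field names are illustrative."""
    independent: frozenset[str]
    approval_required: frozenset[str]
    prohibited: frozenset[str]
    explain_after: frozenset[str]

    def decide(self, action: str) -> Decision:
        # Prohibitions are checked first so they override any other listing.
        if action in self.prohibited:
            return Decision.PROHIBITED
        if action in self.approval_required:
            return Decision.NEEDS_APPROVAL
        if action in self.independent:
            return Decision.ALLOW
        # Assumption: anything not listed fails closed and goes to a human.
        return Decision.NEEDS_APPROVAL

    def must_explain(self, action: str) -> bool:
        # True when the system owes the operator an explanation after acting.
        return action in self.explain_after


# Example policy with made-up action names.
policy = AutonomyPolicy(
    independent=frozenset({"draft_email"}),
    approval_required=frozenset({"send_email"}),
    prohibited=frozenset({"delete_account"}),
    explain_after=frozenset({"draft_email", "send_email"}),
)
```

Checking prohibitions first and defaulting unknown actions to human approval are design choices, not requirements. They reflect the idea that a boundary should hold even when the policy is incomplete.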

The Interface as System Governance

Human-computer interaction scholars long argued that “every interface designer is a system designer” (Laurel, 1991). In autonomous environments, this observation becomes literal.

The interface now includes:

- Task routing and delegation

- Memory and persistence policies

- Tool permissions and constraints

- Escalation and intervention rules

- Failure and recovery mechanisms

Thus, interface design becomes the practice of shaping intelligent behavior within human-defined limits.

Wayfinding in the Age of Autonomy

Hypertext research identified the “wayfinding problem,” where users became disoriented navigating non-linear information (Fleming & Levie, 1993; Nielsen, 1995). Autonomous systems introduce a parallel challenge: behavioral disorientation.

Users must understand:

- What the system is doing

- Why it is acting

- What it intends to do next

- How they can intervene

To address this, modern AI interfaces require new wayfinding elements:

- State — current system understanding

- Plan — intended actions

- Provenance — information informing decisions

- Actions — completed operations

- Controls — available interventions

- Boundaries — operational limits

These elements preserve situational awareness and prevent loss of trust.
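The six wayfinding elements can be treated as a single status record that an agent publishes whenever its state changes. The following is a minimal sketch under that assumption. The `WayfindingSnapshot` name, its fields, and the plain-text rendering are hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class WayfindingSnapshot:
    """Hypothetical status record covering the six wayfinding elements."""
    state: str                                            # current system understanding
    plan: list[str] = field(default_factory=list)         # intended actions
    provenance: list[str] = field(default_factory=list)   # information informing decisions
    actions: list[str] = field(default_factory=list)      # completed operations
    controls: list[str] = field(default_factory=list)     # available interventions
    boundaries: list[str] = field(default_factory=list)   # operational limits

    def render(self) -> str:
        """Return a plain-text summary an operator could scan quickly."""
        sections = [
            ("State", [self.state]),
            ("Plan", self.plan),
            ("Provenance", self.provenance),
            ("Actions", self.actions),
            ("Controls", self.controls),
            ("Boundaries", self.boundaries),
        ]
        lines = []
        for title, items in sections:
            lines.append(f"{title}:")
            # Empty sections are shown explicitly rather than silently omitted.
            lines.extend(f"  - {item}" for item in (items or ["(none)"]))
        return "\n".join(lines)


snapshot = WayfindingSnapshot(
    state="Reviewing unread messages",
    plan=["Draft reply to the newest message"],
    controls=["pause", "cancel"],
    boundaries=["May not send without approval"],
)
print(snapshot.render())
```

Rendering empty sections as "(none)" is deliberate in this sketch: an operator can then tell "nothing has been done yet" apart from "the system is not reporting".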

Mental Models and Metaphors for Autonomous Systems

Metaphors have historically helped users interpret unfamiliar systems by anchoring new concepts to prior knowledge (Gordon, 1994). In intelligent systems, metaphors remain essential — not for aesthetics, but for predictability.

Common useful metaphors include:

- A team of specialists

- Mission control

- Autopilot with manual override

- Structured workflow

These metaphors provide users with a predictive mental model, reducing uncertainty and cognitive load.

Prototyping Safe Autonomy

Rapid prototyping has long been central to interface design, enabling iterative improvement through early feedback (Gordon, 1995). Autonomous systems introduce new risks, however, because they act rather than merely respond.

Safe autonomy requires:

- Sandbox environments

- Dry-run previews

- Stepwise approval mechanisms

- Recovery and rollback paths

- Testing under edge and misuse conditions

The central question shifts from whether the system is usable to whether it is predictable and safe.
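Three of these requirements (dry-run previews, stepwise approval, and rollback) fit naturally into one small execution loop. The sketch below assumes every step can supply its own undo operation, which is often not true in practice. `Step`, `run_plan`, and the `approve` callback are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    apply: Callable[[], None]  # performs the action
    undo: Callable[[], None]   # reverses it (assumed to exist for every step)


def run_plan(steps: list[Step], approve: Callable[[Step], bool],
             dry_run: bool = True) -> list[str]:
    """Preview a plan, or execute it step by step with approval and rollback."""
    if dry_run:
        # Dry run: describe what would happen without touching anything.
        return [f"WOULD: {step.description}" for step in steps]

    done: list[Step] = []
    try:
        for step in steps:
            # Stepwise approval: a human (or policy) signs off on each step.
            if not approve(step):
                raise PermissionError(f"rejected: {step.description}")
            step.apply()
            done.append(step)
    except Exception:
        # Rollback: undo completed steps in reverse order, then re-raise.
        for step in reversed(done):
            step.undo()
        raise
    return [f"DID: {step.description}" for step in done]


# Usage with an in-memory list standing in for a real system.
log: list[str] = []
steps = [
    Step("add a", lambda: log.append("a"), lambda: log.remove("a")),
    Step("add b", lambda: log.append("b"), lambda: log.remove("b")),
]
preview = run_plan(steps, approve=lambda step: True)  # dry run by default
```

Making `dry_run=True` the default means a caller has to opt in to real execution, which is one simple way to keep a prototype from acting by accident.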

Usability Becomes Trust

Traditional usability metrics remain important:

- Task completion time

- Error rate

- User satisfaction

However, autonomous systems introduce new evaluation dimensions:

- Intervention frequency

- Explanation effectiveness

- Confidence calibration

- Behavioral consistency

- Recovery from failure

Usability evolves into trustworthiness.
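Two of these dimensions lend themselves to simple arithmetic. The sketch below shows one possible operationalization, not a standard prescribed by this framework: intervention frequency as the share of actions a human had to step into, and confidence calibration as a Brier score (the mean squared gap between the system's stated confidence and what actually happened). The function names are assumptions.

```python
def intervention_rate(interventions: int, actions: int) -> float:
    """Fraction of system actions that required a human to step in."""
    if actions <= 0:
        raise ValueError("actions must be positive")
    return interventions / actions


def brier_score(confidences: list[float], outcomes: list[int]) -> float:
    """Mean squared gap between stated confidence (0..1) and outcome (0 or 1).

    Lower is better; 0.0 means confidence matched the outcome every time.
    """
    if not confidences or len(confidences) != len(outcomes):
        raise ValueError("need equal-length, non-empty inputs")
    total = sum((c - o) ** 2 for c, o in zip(confidences, outcomes))
    return total / len(confidences)


# 3 interventions across 12 actions.
rate = intervention_rate(3, 12)

# A system that always says "50% sure" while succeeding half the time.
score = brier_score([0.5, 0.5], [1, 0])
```

A falling intervention rate is only good news if calibration holds steady. Fewer interventions combined with a worsening Brier score may mean operators have stopped checking, not that the system has improved.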

Accessibility in Intelligent Systems

Accessibility continues to include visual, auditory, motor, and cognitive design (Park & Hannafin, 1993), but autonomous systems extend accessibility into operational accessibility:

- Can non-experts safely operate the system?

- Can users intervene quickly under pressure?

- Can users understand system reasoning?

Accessibility becomes synonymous with preserving human agency.

Transparency and Explainability

Transparent systems communicate:

- What occurred

- What will occur

- Why actions were taken

- What evidence informed decisions

- Where uncertainty exists

- What options are available

Transparency transforms automation into collaborative intelligence, aligning with modern human-AI interaction research emphasizing explainability and trust (Amershi et al., 2019).

The VIBECT Framework — Designing Trust in Autonomous Systems

Trust in intelligent systems emerges from design. The VIBECT framework names five necessary conditions and the outcome they produce:

Visibility — humans can observe system behavior

Intent — alignment with human goals

Boundaries — actions within defined limits

Explainability — reasoning communicated clearly

Control — human intervention possible

Trust — the resulting outcome

VIBECT reframes interface design as the governance of autonomous behavior rather than the presentation of interaction surfaces.
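As a rough illustration, the framework can be read as a design-review checklist in which Trust is never set directly; it is only supported when the other five conditions hold. The sketch below assumes each condition can be reduced to a yes/no audit answer, which is a simplification made here for the example. The class and method names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VibectReview:
    """Hypothetical yes/no audit of the five VIBECT conditions."""
    visibility: bool      # humans can observe system behavior
    intent: bool          # behavior is aligned with human goals
    boundaries: bool      # actions stay within defined limits
    explainability: bool  # reasoning is communicated clearly
    control: bool         # human intervention is possible

    def missing(self) -> list[str]:
        """Names of conditions that are not yet met, in framework order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def trust_supported(self) -> bool:
        # Trust is treated as the outcome, so there is no field for it.
        return not self.missing()


review = VibectReview(
    visibility=True,
    intent=True,
    boundaries=False,
    explainability=True,
    control=False,
)
print(review.missing())
```

Leaving Trust out of the fields is the point of the sketch: a team cannot tick a box for it, only for the conditions that make it possible.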

Conclusion — The Future Interface

The future interface is not a screen but a system translating human intent into bounded, observable, and controllable autonomous action.

As technologies evolve, the central principle remains unchanged: understanding human cognition, behavior, and goals is fundamental to effective design.

The future of artificial intelligence is not human replacement — it is human-directed intelligence.

The Ambient Interface — Beyond Screens

The next phase of human–AI interaction will move beyond laptops, keyboards, and traditional screens. Intelligent systems will increasingly be accessed through ambient interfaces — persistent, device-agnostic communication channels that travel with the user rather than residing within a single machine.

These interfaces may include:

- Voice-based interaction through natural speech

- Wearable communication devices

- Persistent personal AI accessible across environments

- Embedded or low-friction interaction systems integrated into everyday tools

The defining characteristic of the ambient interface is continuity. The system remains accessible regardless of device, location, or modality. Interaction becomes conversational rather than mechanical, shifting from operating software to communicating with intelligence.

Importantly, ambient interaction does not eliminate traditional interfaces but complements them. Visual systems will remain essential for inspection, oversight, and control, while voice and conversational interaction become the primary mode for intent expression and task delegation.

Persistence Across Contexts

Future intelligent systems will not be tied to a single device. Instead, they will operate as persistent agents that move with the user across environments:

- From home to work

- From mobile to desktop

- From voice to visual interface

- From passive listening to active execution

This persistence introduces new design challenges in identity, continuity, and trust. Users must be confident that the system:

- Remains aligned with their intent

- Maintains secure boundaries

- Operates consistently across contexts

The interface therefore becomes less about screens and more about relationship continuity.

Security and Development Agents in Autonomous Systems

As intelligent systems become more autonomous and persistent, two classes of agents will become foundational:

Security Agents

Security agents continuously monitor system behavior, permissions, and risk. Their role includes:

- Protecting identity and data integrity

- Enforcing autonomy boundaries

- Detecting abnormal or unsafe behavior

- Managing access and authorization

- Preserving user sovereignty over the system

Security becomes not a feature but a core behavioral layer of intelligent systems.
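To give a sense of what "detecting abnormal or unsafe behavior" might look like at its simplest, the sketch below flags an agent that performs more actions than expected within a sliding time window. It is a toy stand-in for real monitoring. The `RateMonitor` class, its thresholds, and the use of plain numeric timestamps are all assumptions made for illustration.

```python
from collections import deque


class RateMonitor:
    """Flags bursts: more than max_actions within window_seconds."""

    def __init__(self, max_actions: int, window_seconds: float) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps: deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one action; return True if the recent rate looks abnormal."""
        self._timestamps.append(timestamp)
        # Drop events that have fallen out of the sliding window.
        while timestamp - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_actions


# Allow at most 2 actions in any 10-second window.
monitor = RateMonitor(max_actions=2, window_seconds=10.0)
flags = [monitor.record(t) for t in (0.0, 1.0, 2.0, 20.0)]
```

In a fuller design, a flagged burst would feed the escalation and intervention rules described earlier, for example by pausing the agent and asking the operator to confirm. The monitor should not decide on its own what to do next.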

Development Agents

Development agents function as adaptive system architects, enabling intelligent systems to evolve responsibly. Their role includes:

- Monitoring performance and system drift

- Refactoring workflows and improving task execution

- Maintaining compatibility across tools and environments

- Assisting in debugging, observability, and system refinement

- Supporting human-directed system evolution

These agents ensure that intelligent systems remain maintainable, adaptable, and aligned with human goals over time.

Ambient Interaction and the VIBECT Framework

The shift to ambient interfaces does not replace the VIBECT model — it reinforces it.

As intelligent systems become less visible in physical form, Visibility, Intent, Boundaries, Explainability, and Control become more important, not less.

Trust in ambient intelligence depends on:

- Knowing when the system is active

- Understanding what it is doing

- Maintaining the ability to intervene

- Ensuring secure and bounded behavior

The future of human–AI interaction is not invisible automation, but transparent, secure, human-directed intelligence operating seamlessly across environments.

References

Amershi, S., Weld, D., Vorvoreanu, M., et al. (2019). Guidelines for Human-AI Interaction. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems.

Fleming, M., & Levie, W. H. (1993). Instructional Message Design. Educational Technology Publications.

Gagné, R. M., & Merrill, M. D. (1990). Integrative Goals for Instructional Design. Educational Technology Research and Development.

Gordon, S. E. (1994). Systematic Training Program Design. Prentice Hall.

Gordon, S. E. (1995). Rapid Prototyping in Instructional Design.

Laurel, B. (1991). The Art of Human-Computer Interface Design. Addison-Wesley.

Nielsen, J. (1995). Multimedia and Hypertext: The Internet and Beyond. Academic Press.

Norman, D. A. (1988). The Design of Everyday Things. Basic Books.

Park, I., & Hannafin, M. J. (1993). Empirically-Based Guidelines for the Design of Interactive Multimedia. Educational Technology Research and Development.